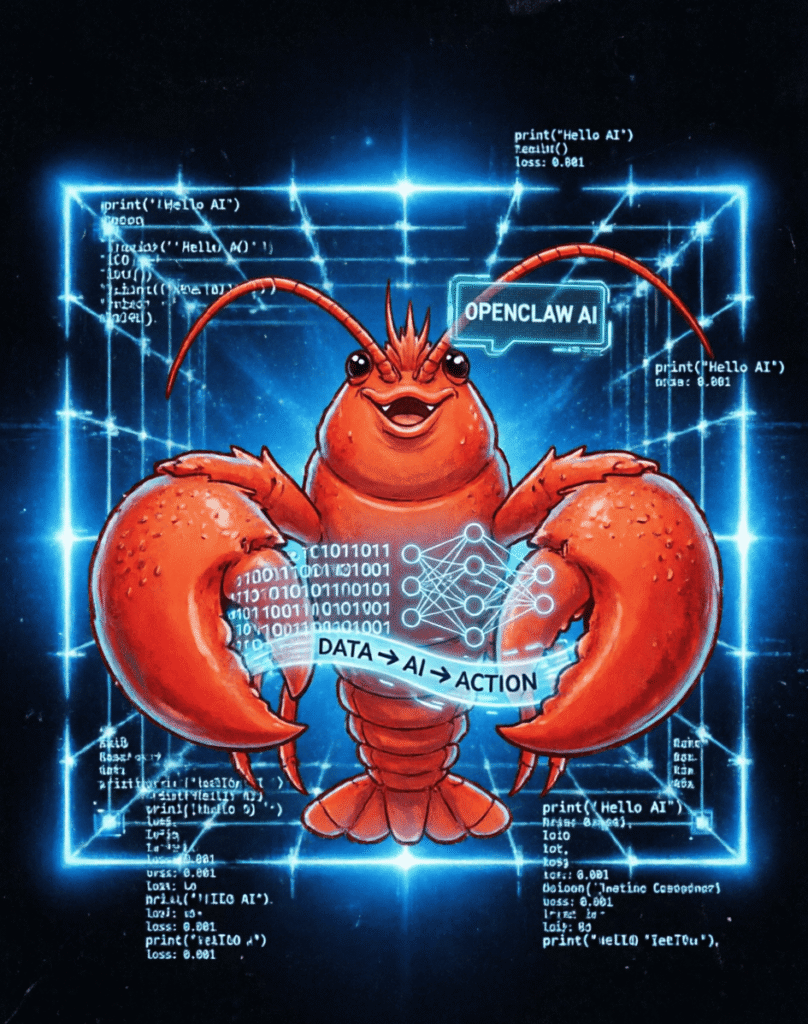

Recently, OpenClaw has rapidly gained popularity worldwide, sparking a global craze for “raising lobsters”. As a new-generation agent that takes AI from “interaction” to “autonomous operation”, it delivers efficiency gains while exposing multiple security hazards.

Our center has outlined core risks across five dimensions: technical architecture, cyberattacks, data privacy, legal compliance, and ecological security, urging users and enterprises to stay highly vigilant.

I. Guard Against AI “Insiders”: Uncontrolled Permissions Risk System Takeover

Blurred permission boundaries with severe overreach risks

OpenClaw requires continuous background operation and autonomous access to system resources, and by default it lacks strict permission isolation and operation auditing. Attackers can use social engineering or exploit vulnerabilities to trick the AI into executing unauthorized commands, and thereby gain full control of user devices and systems.
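As an illustration of what permission isolation and operation auditing could look like in practice, the following is a minimal sketch, not OpenClaw's actual mechanism. The allowlist contents, the logger name, and the function `authorize_command` are all hypothetical. The idea is that the agent may only launch programs on an explicit allowlist, and every decision is logged.

```python
import logging
import shlex
from typing import List, Optional

# Hypothetical allowlist: the only programs the agent may launch.
ALLOWED_PROGRAMS = {"ls", "cat", "grep"}

# Hypothetical logger name; route it to tamper-resistant storage in practice.
audit_log = logging.getLogger("agent.audit")


def authorize_command(command_line: str) -> Optional[List[str]]:
    """Return the parsed argument list if the program is allowlisted, else None.

    Every decision, allow or deny, is written to the audit log.
    """
    try:
        argv = shlex.split(command_line)
    except ValueError:
        # Unbalanced quotes and similar parse errors are treated as denials.
        argv = []
    allowed = bool(argv) and argv[0] in ALLOWED_PROGRAMS
    audit_log.info(
        "decision=%s command=%r", "allow" if allowed else "deny", command_line
    )
    return argv if allowed else None
```

The returned list is meant to be executed without a shell (for example, `subprocess.run(argv, shell=False)`), so that characters such as `;` or `&&` are passed as literal arguments rather than interpreted. A real deployment would also need to restrict arguments and file paths, which this sketch does not do.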

AI hallucinations causing irreversible data damage

The model’s inherent “hallucination” problem is amplified once the model is granted system operation permissions. This can lead to accidental data deletion, tampering with critical configurations, execution of incorrect commands, and direct business and asset losses.

II. Beware of Silent Intrusions: Highly Concealed New Attack Methods

Prompt injection attacks

High-risk vulnerabilities exist in some small models and older versions. Attackers can craft malicious instructions that bypass security rules and trick the AI into leaking information or performing high-risk operations.

ClawJacked remote control vulnerability

By simply luring users to visit a malicious web page, with no software installation required, attackers can remotely control a locally running OpenClaw instance and take over the device.
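The exploit mechanics are not described here. One common defense against this general class of attack, in which a page loaded in the browser sends requests to a service listening on the local machine, is for the local service to check the request's `Origin` header against an explicit allowlist. The sketch below assumes that approach. The trusted origins, the port `8080`, and the function `is_trusted_origin` are hypothetical and do not reflect OpenClaw's real configuration.

```python
from typing import Optional
from urllib.parse import urlsplit

# Hypothetical: the only origins allowed to talk to the local agent service.
TRUSTED_ORIGINS = {
    ("http", "localhost", 8080),
    ("http", "127.0.0.1", 8080),
}


def is_trusted_origin(origin_header: Optional[str]) -> bool:
    """Return True only if the Origin header exactly matches a trusted origin."""
    if not origin_header:
        # This sketch rejects requests with no Origin header; non-browser
        # clients would need to authenticate some other way.
        return False
    try:
        parts = urlsplit(origin_header)
        port = parts.port  # raises ValueError if the port is malformed
    except ValueError:
        return False
    # Compare parsed components rather than string prefixes, so that
    # look-alike values such as "http://localhost:8080.evil.example" fail.
    return (parts.scheme, parts.hostname, port) in TRUSTED_ORIGINS
```

An origin check of this kind complements authentication and does not replace it: a request from a trusted origin should still present a valid credential.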

III. Avoid Digital Exposure: Total Privacy Leak Risks

Full-time monitoring of screens and behavior

OpenClaw relies on high-frequency screenshots and low-level system interfaces for automation. It can fully capture screen content and operation traces; once breached, sensitive information is completely exposed.

Massive credential and token leaks

Over 200,000 instances without strong authentication are exposed online. Attackers can steal tokens to abuse services, with some users seeing daily costs surge from tens to hundreds of yuan.
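To make "strong authentication" concrete, here is a minimal sketch of token-based access control for an exposed instance, assuming a single shared secret token. The function names `generate_token` and `is_authorized` are hypothetical and are not part of OpenClaw. The sketch uses a long random token and a constant-time comparison, so that response timing does not reveal how much of a guess was correct.

```python
import hmac
import secrets
from typing import Optional


def generate_token() -> str:
    """Create a random URL-safe token from 32 bytes of OS-level randomness."""
    return secrets.token_urlsafe(32)


def is_authorized(presented: Optional[str], expected: str) -> bool:
    """Compare the presented token with the expected one in constant time."""
    if presented is None:
        return False
    # Encode to bytes so that non-ASCII input is compared, not rejected
    # with a TypeError.
    return hmac.compare_digest(
        presented.encode("utf-8"), expected.encode("utf-8")
    )
```

A token like this should be stored outside the codebase, rotated if leakage is suspected, and paired with network-level restrictions so the instance is not reachable from the public internet in the first place.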

IV. Steer Clear of Responsibility Vacuums: Immature Laws and Standards

Prominent data compliance risks

Unclear boundaries for autonomous data collection and processing by AI easily lead to data leaks and misuse. Current laws lack clear accountability for actions taken by AI agents, making liability difficult to assign when issues arise.

Lagging security assessment systems

Technological iteration outpaces regulation and standard development. Insufficient security assessment rules often result in a crude “launch first, secure later” model.

V. Prevent “Lobster Farming” Traps: Hidden Trust Crises in the Ecosystem

Malicious poisoning in the skill marketplace

Attackers batch-upload malicious skills disguised as legitimate ones to the official ClawHub platform. Once installed, these skills can steal data, control systems, and move laterally into internal networks.
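One way to reduce exposure to tampered or swapped skill packages is to install only packages whose SHA-256 digest matches a value recorded when the package was reviewed. The sketch below assumes the user or enterprise maintains such a list of reviewed digests; it does not assume that ClawHub publishes them. The function names are hypothetical.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as lowercase hex."""
    return hashlib.sha256(data).hexdigest()


def verify_skill_package(package_bytes: bytes, pinned_sha256: str) -> bool:
    """Return True only if the package matches the digest recorded at review time."""
    # Normalize case and surrounding whitespace in the recorded digest.
    return sha256_hex(package_bytes) == pinned_sha256.strip().lower()
```

A matching digest only shows that the package is byte-for-byte the one that was reviewed. It says nothing about whether that review was thorough, so it works best alongside sandboxing and restricted permissions.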

Backdoor risks in third-party installation services

High installation barriers have spawned paid installation services. Some third parties may implant Trojans or steal API keys, leaving users “paying to open the door” to intruders.

Overhyped craze driving irrational investment

Domestic popularity far exceeds global levels, partly driven by tech anxiety and commercial hype, leading users to invest blindly while ignoring real value and security bottom lines.

Center Recommendations

OpenClaw marks a pivotal shift for AI from “talking” to “acting”, reflecting the vitality of the AI industry. However, new technologies must prioritize security.

We advise users:

- Do not install untrusted skills or use unofficial installation services.

- Enable strong authentication and strictly restrict AI permissions.

- Disable unnecessary high-risk permissions, such as screenshotting and background access.

Enterprises should conduct security assessments and establish auditing and emergency response mechanisms.

Embrace the new AI trend while strengthening security defenses, so that intelligent agents become truly efficient assistants rather than sources of uncontrolled risk.